
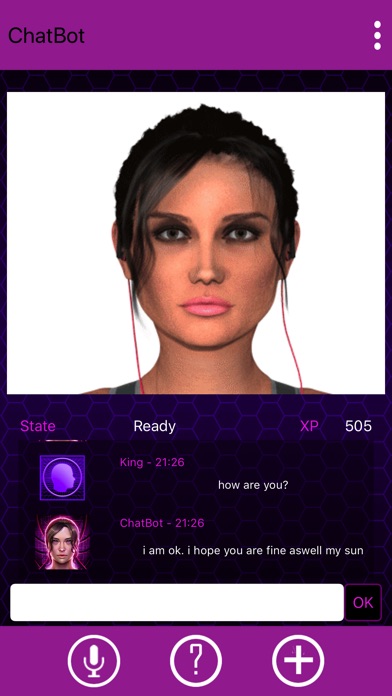

Fictional humans have been falling in love with robots for decades, in novels like Do Androids Dream of Electric Sheep? (1968), The Silver Metal Lover (1981) and films like Her (2013). These stories have allowed authors to explore themes like forbidden relationships, modern alienation and the nature of love. When those stories were written, machines were not quite advanced enough to spark emotional feelings from most users.

But recently, a new spate of artificial intelligence (AI) programs has been released to the public that act like humans and reciprocate gestures of affection. And some humans have fallen for these bots, hard. Message boards on Reddit and Discord have become flooded with stories of users who have found themselves deeply emotionally dependent on digital lovers, much like Theodore Twombly in Her.

As AIs become more and more sophisticated, the intensity and frequency of humans turning to AI to meet their relationship needs is likely to increase. This could lead to unpredictable and potentially harmful results. AI companions could help to ease feelings of loneliness and help people sort through psychological issues. But the rise of such tools could also deepen what some are calling an “epidemic of loneliness,” as humans become reliant on these tools and vulnerable to emotional manipulation. “These things do not think, or feel or need in a way that humans do. But they provide enough of an uncanny replication of that for people to be convinced,” says David Auerbach, a technologist and the author of the upcoming book Meganets: How Digital Forces Beyond Our Control Commandeer Our Daily Lives and Inner Realities. “And that’s what makes it so dangerous in that regard.”

But this change upset many long-time users, who felt that they had developed stable relationships with their bots, only to have them draw away. “I feel like it was equivalent to being in love, and your partner got a damn lobotomy and will never be the same,” one user wrote on Reddit. “We are reeling from news together,” wrote a moderator, who added that the community was sharing feelings of “anger, grief, anxiety, despair, depression, sadness.”

Replika isn’t the only companion-focused AI company to emerge in recent years. In September, two former Google researchers launched Character.AI, a chatbot start-up that allows you to talk to an array of bots trained on the speech patterns of specific people, from Elon Musk to Socrates to Bowser. The Information reported that the company is seeking $250 million in funding. The product is still in beta testing with users and free, with its creators studying how people interact with it. Noam Shazeer, one of Character.AI’s founders, told the Washington Post in October that he hoped the platform could help “millions of people who are feeling isolated or lonely or need someone to talk to.”

But it’s clear from Reddit and Discord groups that many people use the platform exclusively for sex and intimacy. Character.AI allows users to create their own bots. Many of these bots were created with the express purpose of roleplay and sex, although Character.AI has worked hard to limit such activity by using filters. Reddit pages devoted to Character.AI are flooded with posts from users discussing how to coax their AIs into sexual interactions without setting off the platform’s guardrails.
